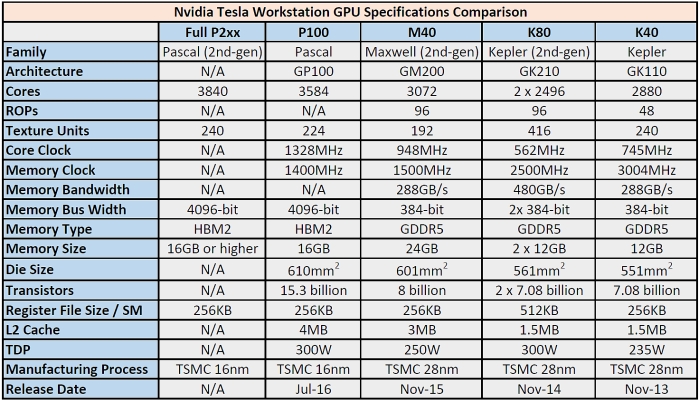

Desktop GPUs shall only be installed on PCs. Each GPU model has specific environment where it is supposed to be running. When purchasing a GPU, especially NVDIA GPU, you may need to make sure the intended use of the GPU. It is suffice to say that only GPUs with Kepler architecture or newer are capable of accomplishing the deep learning tasks, or in other words, deep-learning ready. The library itself is compatible with CUDA-enabled GPUs with compute capability at least 3.0. These methods are provided in the cuDNN library. The deep learning frameworks that rely on CUDA for GPU computing operate by invoking CUDA-specific GPU-accelerated deep learning methods to speed up the computation. The GPUs built with Fermi architecture has a maximum compute capability of 2.1, while the Kepler architecture has a minimum compute capability of 3.0. NVIDIA introduced a terminology called CUDA compute capability that refers to the general specifications and available features of a CUDA-enabled GPU. It is important to note that not all CUDA-enabled GPUs can perform deep learning tasks.

In 2022, this means that NVIDIA GPUs with the following architecture support containerization of GPU resources: Only graphics card having GPUs with architecture newer than Fermi can benefit from this feature. This utility, however, cannot be immediately usable for all NVIDIA graphics card models.

As the name suggests, the utility targets Docker container type. NVIDIA provides a utility called NVIDIA Docker or nvidia-docker2 that enables the containerization of a GPU-accelerated machine.

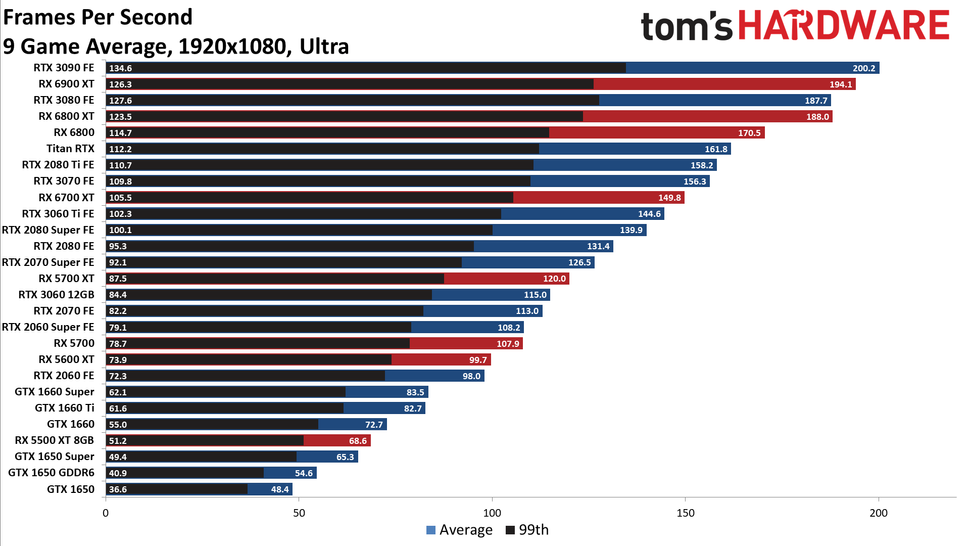

This means that that the underlying GPU resources should then be shared among the containers. With the increasing popularity of container-based deployment, a system architect may consider creating several containers with each running different AI tasks. From the frugality point of view, it may be a brilliant idea to scavenge unused graphics cards from the fading PC world and line them up on another unused desktop motherboard to create a somewhat powerful compute node for AI tasks. A vantage point with GPU computing is related with the fact that the graphics card occupies the PCI / PCIe slot. If you are doing deep learning AI research and/or development with GPUs, big chance you will be using graphics card from NVIDIA to perform the deep learning tasks.
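To make the compute-capability rule and the GPU-sharing idea concrete, here is a minimal sketch. It assumes you already know each card's compute capability as a `(major, minor)` tuple. On a real machine with PyTorch installed, `torch.cuda.get_device_capability(i)` returns such a tuple. The `Gpu` class, `plan_containers` helper, task names and image name are all hypothetical. The sketch keeps only cards at 3.0 or above (Kepler and newer) and hands them out round-robin to containers. It builds `docker run` argument lists that use the `nvidia` runtime registered by nvidia-docker2, with the `NVIDIA_VISIBLE_DEVICES` environment variable selecting the GPU.

```python
from dataclasses import dataclass

# Deep-learning-ready threshold from the text: Kepler or newer, i.e. compute
# capability 3.0 or higher (Fermi tops out at 2.1).
MIN_DL_CAPABILITY = (3, 0)


@dataclass(frozen=True)
class Gpu:
    index: int                    # device index as seen by the NVIDIA driver
    name: str                     # marketing name, for readability only
    capability: tuple[int, int]   # (major, minor) CUDA compute capability


def is_deep_learning_ready(capability: tuple[int, int]) -> bool:
    """True for compute capability >= 3.0 (tuples compare major, then minor)."""
    return capability >= MIN_DL_CAPABILITY


def plan_containers(gpus: list[Gpu], tasks: list[str], image: str) -> list[list[str]]:
    """Hypothetical helper: give each task one deep-learning-ready GPU, reusing
    GPUs round-robin when there are more tasks than cards (i.e. the GPUs are
    shared among containers), and return one `docker run` argv per task."""
    eligible = [g for g in gpus if is_deep_learning_ready(g.capability)]
    if not eligible:
        raise ValueError("no GPU with compute capability >= 3.0 available")

    commands = []
    for i, task in enumerate(tasks):
        gpu = eligible[i % len(eligible)]
        commands.append([
            "docker", "run", "--rm",
            "--runtime=nvidia",                          # runtime added by nvidia-docker2
            "-e", f"NVIDIA_VISIBLE_DEVICES={gpu.index}",  # expose only this GPU
            "--name", task,
            image,
        ])
    return commands


if __name__ == "__main__":
    # A scavenged mix of cards: the Fermi card is filtered out.
    rig = [
        Gpu(0, "GeForce GTX 580", (2, 0)),   # Fermi
        Gpu(1, "GeForce GTX 780", (3, 5)),   # Kepler
        Gpu(2, "GeForce GTX 1080", (6, 1)),  # Pascal
    ]
    for argv in plan_containers(rig, ["train-a", "train-b", "infer-c"], "my-dl-image"):
        print(" ".join(argv))
```

With three tasks and two eligible cards, `train-a` and `infer-c` both land on GPU 1 while `train-b` gets GPU 2. Round-robin is just the simplest way to illustrate sharing. A real deployment might instead weigh each card's memory or the expected load of each task.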
